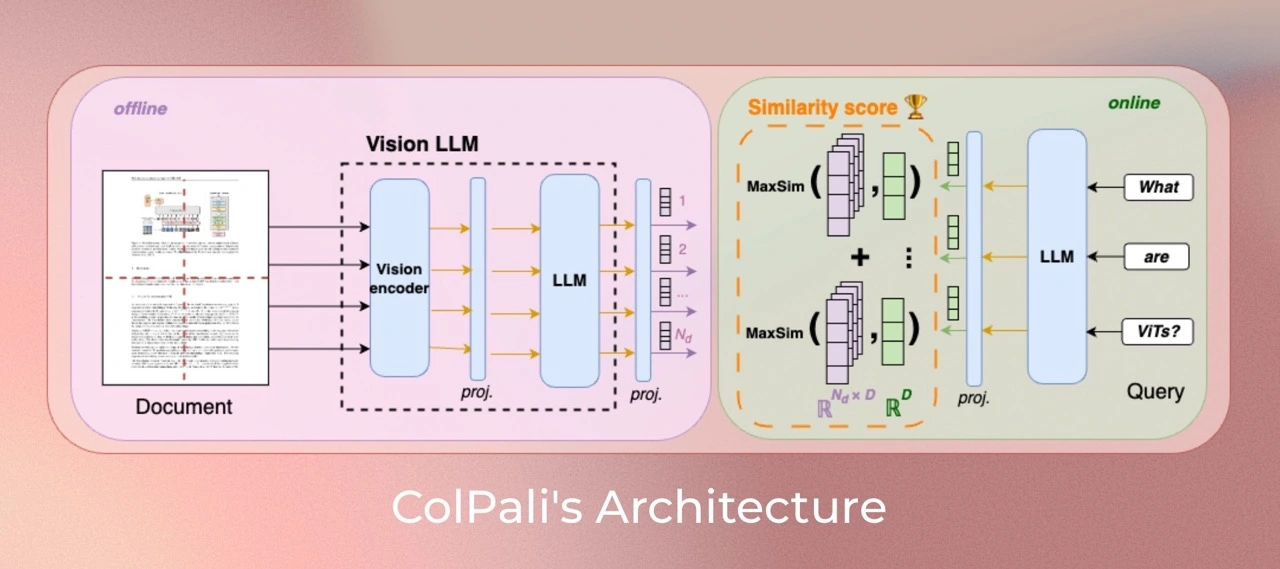

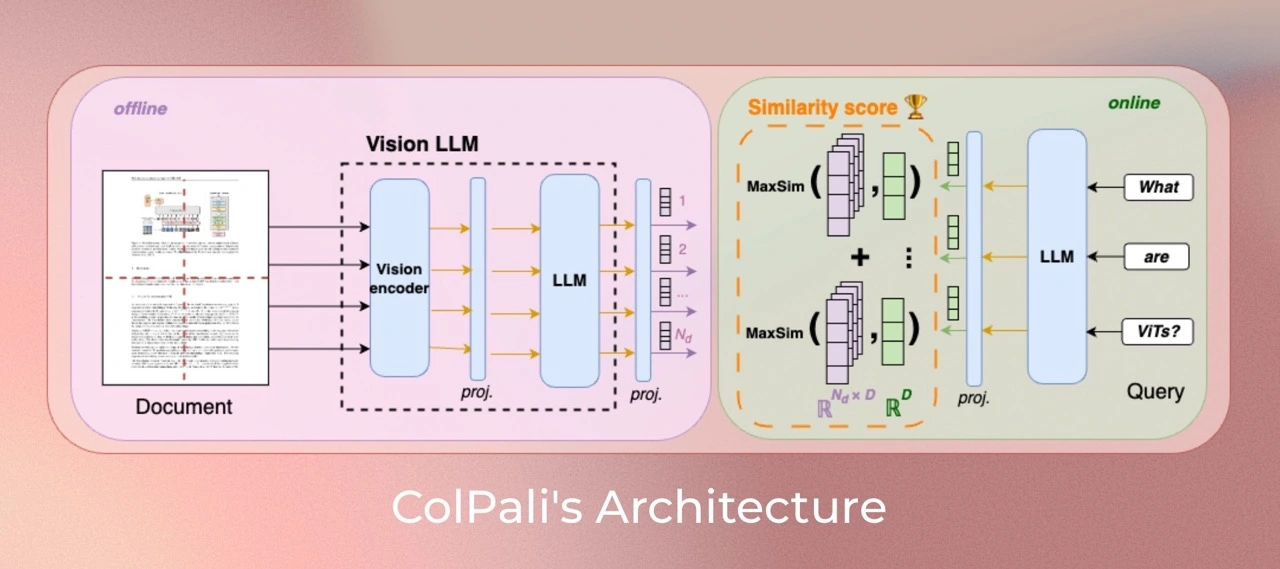

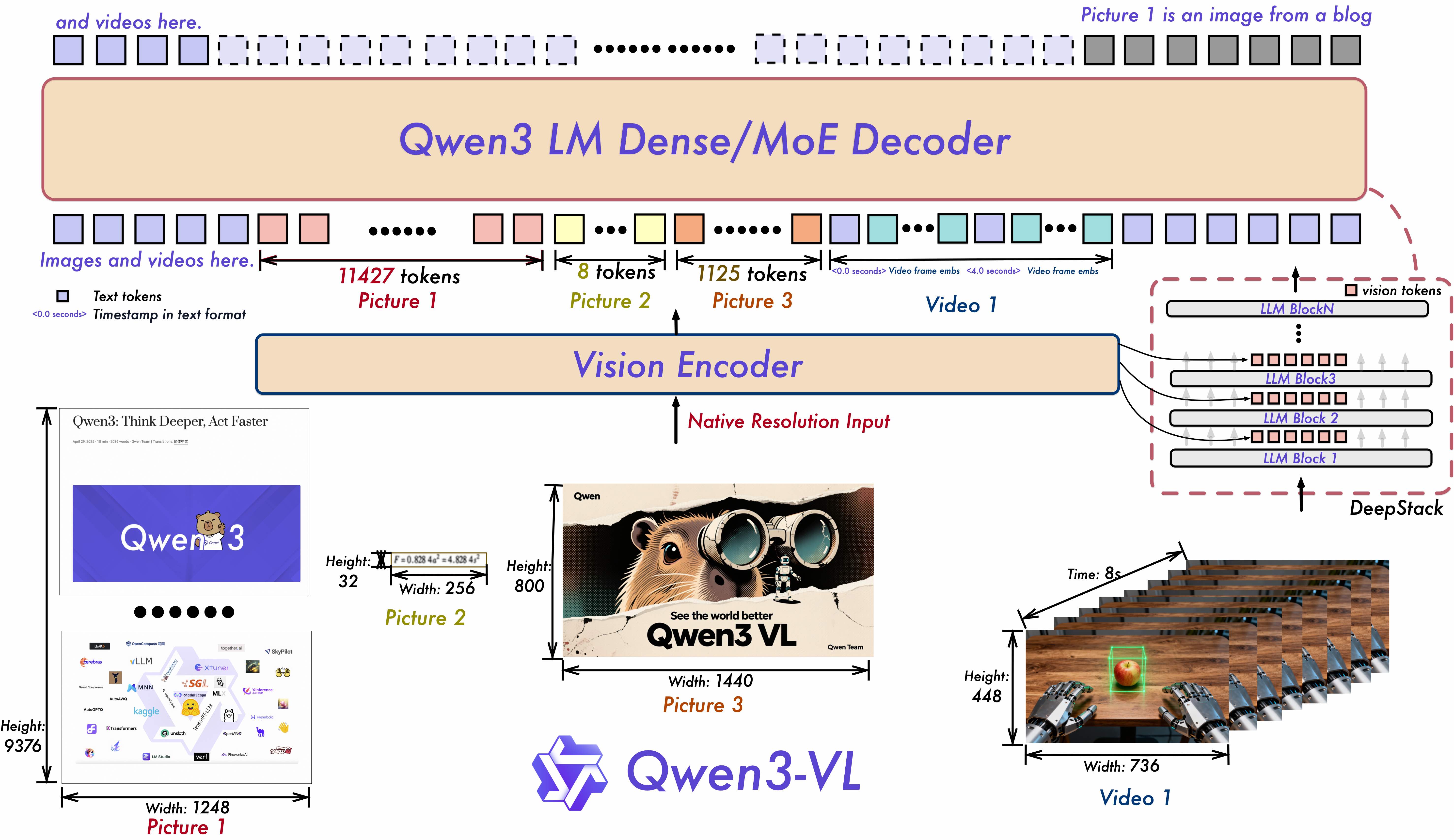

1. **Interleaved-MRoPE**: Full-frequency allocation over time, width, and height via robust positional embeddings, enhancing long-horizon video reasoning.
2. **DeepStack**: Fuses multi-level ViT features to capture fine-grained details and sharpen image–text alignment.
3. **Text–Timestamp Alignment**: Moves beyond T-RoPE to precise, timestamp-grounded event localization for stronger video temporal modeling.

This is the weight repository for Qwen3-VL-2B-Instruct.

## Model Training

### Dataset

Our training dataset of 127,460 query-page pairs comprises the train sets of openly available academic datasets (63%) and a synthetic dataset (37%) made up of pages from web-crawled PDF documents, augmented with VLM-generated (Claude-3 Sonnet) pseudo-questions.
Our training set is fully English by design, enabling us to study zero-shot generalization to non-English languages. We explicitly verify that no multi-page PDF document is used both in [*ViDoRe*](https://huggingface.co/collections/vidore/vidore-benchmark-667173f98e70a1c0fa4db00d) and in the train set, to prevent evaluation contamination.
A validation set is created with 2% of the samples to tune hyperparameters.

*Note: Multilingual data is present in the pretraining corpus of the language model and most probably in the multimodal training.*

### Parameters

All models are trained for **5 epochs** on the train set. Unless specified otherwise, we train models in `bfloat16` format, use low-rank adapters ([LoRA](https://arxiv.org/abs/2106.09685))
with `alpha=32` and `r=32` on the transformer layers from the language model,
as well as the final randomly initialized projection layer, and use a `paged_adamw_8bit` optimizer.
We train on a 2× NVIDIA A100 80GB GPU setup with data parallelism, a learning rate of 5e-5 with linear decay and 2.5% warmup steps, and a batch size of 64.

### ColQwen3-v0.1 Test Results

⚙️ I used [mteb](https://github.com/illuin-tech/vidore-benchmark) to evaluate my ColQwen3-v0.1 retriever on the ViDoRe benchmark.

| Model | ArxivQ | DocQ | InfoQ | TabF | TATQ | Shift | AI | Energy | Gov. | Health. | Avg. |
|---|---|---|---|---|---|---|---|---|---|---|---|
| **`Unstructured` (text-only)** | | | | | | | | | | | |
| - BM25 | - | 34.1 | - | - | 44.0 | 59.6 | 90.4 | 78.3 | 78.8 | 82.6 | - |
| - BGE-M3 | - | 28.4 (↓5.7) | - | - | 36.1 (↓7.9) | 68.5 (↑8.9) | 88.4 (↓2.0) | 76.8 (↓1.5) | 77.7 (↓1.1) | 84.6 (↑2.0) | - |
| **`Unstructured` + OCR** | | | | | | | | | | | |
| - BM25 | 31.6 | 36.8 | 62.9 | 46.5 | 62.7 | 64.3 | 92.8 | 85.9 | 83.9 | 87.2 | 65.5 |
| - BGE-M3 | 31.4 (↓0.2) | 25.7 (↓11.1) | 60.1 (↓2.8) | 70.8 (↑24.3) | 50.5 (↓12.2) | **73.2 (↑8.9)** | 90.2 (↓2.6) | 83.6 (↓2.3) | 84.9 (↑1.0) | 91.1 (↑3.9) | 66.1 (↑0.6) |
| **`Unstructured` + Captioning** | | | | | | | | | | | |
| - BM25 | 40.1 | 38.4 | 70.0 | 35.4 | 61.5 | 60.9 | 88.0 | 84.7 | 82.7 | 89.2 | 65.1 |
| - BGE-M3 | 35.7 (↓4.4) | 32.9 (↓5.4) | 71.9 (↑1.9) | 69.1 (↑33.7) | 43.8 (↓17.7) | 73.1 (↑12.2) | 88.8 (↑0.8) | 83.3 (↓1.4) | 80.4 (↓2.3) | 91.3 (↑2.1) | 67.0 (↑1.9) |
| **Contrastive VLMs** | | | | | | | | | | | |
| Jina-CLIP | 25.4 | 11.9 | 35.5 | 20.2 | 3.3 | 3.8 | 15.2 | 19.7 | 21.4 | 20.8 | 17.7 |
| Nomic-vision | 17.1 | 10.7 | 30.1 | 16.3 | 2.7 | 1.1 | 12.9 | 10.9 | 11.4 | 15.7 | 12.9 |
| SigLIP (Vanilla) | 43.2 | 30.3 | 64.1 | 58.1 | 26.2 | 18.7 | 62.5 | 65.7 | 66.1 | 79.1 | 51.4 |
| BiSigLIP (+fine-tuning) | 58.5 (↑15.3) | 32.9 (↑2.6) | 70.5 (↑6.4) | 62.7 (↑4.6) | 30.5 (↑4.3) | 26.5 (↑7.8) | 74.3 (↑11.8) | 73.7 (↑8.0) | 74.2 (↑8.1) | 82.3 (↑3.2) | 58.6 (↑7.2) |
| BiPali (+LLM) | 56.5 (↓2.0) | 30.0 (↓2.9) | 67.4 (↓3.1) | 76.9 (↑14.2) | 33.4 (↑2.9) | 43.7 (↑17.2) | 71.2 (↓3.1) | 61.9 (↓11.7) | 73.8 (↓0.4) | 73.6 (↓8.8) | 58.8 (↑0.2) |
| *ColPali* (+Late Inter.) | 79.1 (↑22.6) | 54.4 (↑24.5) | 81.8 (↑14.4) | 83.9 (↑7.0) | 65.8 (↑32.4) | 73.2 (↑29.5) | 96.2 (↑25.0) | 91.0 (↑29.1) | 92.7 (↑18.9) | 94.4 (↑20.8) | 81.3 (↑22.5) |
| **Ours** | | | | | | | | | | | |
| ***Colqwen3-v0.1* (+Late Inter.)** | **80.1 (↑1.0)** | **55.8 (↑1.4)** | **86.7 (↑5.9)** | 82.1 (↓1.8) | **70.8 (↑5.0)** | **75.9 (↑2.7)** | **99.1 (↑2.9)** | **95.6 (↑4.6)** | **96.1 (↑3.4)** | **96.8 (↑2.4)** | **83.9 (↑2.6)** |
| ***Colqwen3-v0.2* (+Late Inter.)** | **85.7 (↑6.6)** | **54.6 (↑0.2)** | **86.2 (↑5.4)** | **87.3 (↑3.4)** | **75.8 (↑10.0)** | **82.0 (↑9.8)** | 99.1 (-) | **95.3 (↑4.3)** | **93.5 (↑0.8)** | **96.6 (↑2.2)** | **85.6 (↑4.3)** |

## Usage 🤗

Make sure `colpali-engine` is installed from source or at a version newer than 0.3.4.
The `transformers` version must be >= **4.57.1** (compatible with the Qwen3-VL interface).

```bash
pip install git+https://github.com/Mungeryang/colqwen3
```

```python
import torch
from PIL import Image
from transformers.utils.import_utils import is_flash_attn_2_available

from colpali_engine.models import ColQwen3, ColQwen3Processor

model = ColQwen3.from_pretrained(
    "goodman2001/colqwen3-v0.1",
    torch_dtype=torch.bfloat16,
    device_map="cuda:0",  # or "mps" if on Apple Silicon
    attn_implementation="flash_attention_2" if is_flash_attn_2_available() else None,
).eval()
processor = ColQwen3Processor.from_pretrained("goodman2001/colqwen3-v0.1")

# Your inputs
images = [
    Image.new("RGB", (128, 128), color="white"),
    Image.new("RGB", (64, 32), color="black"),
]
queries = [
    "Is attention really all you need?",
    "What is the amount of bananas farmed in Salvador?",
]

# Process the inputs
batch_images = processor.process_images(images).to(model.device)
batch_queries = processor.process_queries(queries).to(model.device)

# Forward pass
with torch.no_grad():
    image_embeddings = model(**batch_images)
    query_embeddings = model(**batch_queries)

scores = processor.score_multi_vector(query_embeddings, image_embeddings)
```

## Limitations

- **Focus**: The model primarily focuses on PDF-type documents and high-resource languages, potentially limiting its generalization to other document types or less represented languages.
- **Support**: The model relies on multi-vector retrieval derived from the ColBERT late interaction mechanism, which may require engineering effort to adapt to widely used vector retrieval frameworks that lack native multi-vector support.

## License

ColQwen3's vision language backbone model ([Qwen3-VL](https://github.com/QwenLM/Qwen3-VL)) is under the `apache2.0` license. The adapters attached to the model are under the MIT license.

## Contact

- Mungeryang: mungerygm@gmail.com/yangguimiao@iie.ac.cn

## Acknowledgments

❤️❤️❤️

> [!WARNING]
> Thanks to the **Colpali team** and **Qwen team** for their excellent open-source work!
> I accomplished this work by **standing on the shoulders of giants~** 👆👍
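## Late-Interaction Scoring Sketch

The Limitations section notes that the model relies on ColBERT-style late interaction over multi-vector embeddings. The snippet below is a minimal, dependency-free sketch of that scoring rule (often called MaxSim): for each query token embedding, take the maximum dot product over all page patch embeddings, then sum those maxima. It is an illustration only, not the `colpali_engine` implementation behind `score_multi_vector`; the helper names (`dot`, `maxsim_score`, `score_matrix`) and the toy 2-dimensional vectors are hypothetical.

```python
from typing import List, Sequence

Vector = Sequence[float]


def dot(u: Vector, v: Vector) -> float:
    """Dot product of two equal-length vectors."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same dimension")
    return sum(a * b for a, b in zip(u, v))


def maxsim_score(query_tokens: Sequence[Vector], page_patches: Sequence[Vector]) -> float:
    """Late-interaction (MaxSim) score between one query and one page.

    For each query token embedding, keep the best-matching page patch
    (maximum dot product), then sum those maxima over all query tokens.
    """
    if not query_tokens or not page_patches:
        raise ValueError("query and page must each contain at least one vector")
    return sum(max(dot(q, p) for p in page_patches) for q in query_tokens)


def score_matrix(
    queries: Sequence[Sequence[Vector]],
    pages: Sequence[Sequence[Vector]],
) -> List[List[float]]:
    """Score every query against every page; result[i][j] is query i vs page j."""
    return [[maxsim_score(q, p) for p in pages] for q in queries]


# Toy embeddings (hypothetical values, for illustration only).
query = [[1.0, 0.0], [0.0, 1.0]]                # two query tokens
page_a = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]   # three patches
page_b = [[0.5, 0.5]]                           # one patch

scores = score_matrix([query], [page_a, page_b])
print(scores)  # [[2.0, 1.0]]
```

Because each page keeps one vector per patch instead of a single pooled vector, a vector store without native multi-vector support would need an extra step such as this one at re-ranking time.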

## Citation

If you use any datasets or models from this organization in your research, please cite the original dataset as follows:

```bibtex
@misc{faysse2024colpaliefficientdocumentretrieval,
  title={ColPali: Efficient Document Retrieval with Vision Language Models},
  author={Manuel Faysse and Hugues Sibille and Tony Wu and Bilel Omrani and Gautier Viaud and Céline Hudelot and Pierre Colombo},
  year={2024},
  eprint={2407.01449},
  archivePrefix={arXiv},
  primaryClass={cs.IR},
  url={https://arxiv.org/abs/2407.01449},
}
```