LEAD: Minimizing Learner–Expert Asymmetry in End-to-End Driving

⚠️ Coordinate System Warning

This model was trained in the left-handed coordinate system of CARLA (x-forward, y-right, z-up), not the ISO 8855 convention used by NAVSIM / nuPlan / most AD stacks (x-forward, y-left, z-up).

If you use ltfv6.py directly, the predicted waypoints and headings are in CARLA's left-handed frame. You must convert the planning output back to ISO 8855 before feeding it to any downstream planner, simulator, or evaluation tool that expects the right-handed convention.

✅ Recommended: use the prepared NAVSIM workspaces

For correct, reproducible evaluation, use one of the prepared workspaces below. They already wire up the model with the correct coordinate conversion, input preprocessing, and metric computation:

- NAVSIM v1.1: 3rd_party/navsim_workspace/navsimv1.1

- NAVSIM v2.2: 3rd_party/navsim_workspace/navsimv2.2

These are the only configurations we have validated end-to-end against the reported numbers. If you evaluate outside of them, results may silently disagree with the paper.

Manual conversion (only if you must integrate the model yourself)

waypoints_iso = waypoints_carla.copy()  # x unchanged (NumPy; use .clone() for torch tensors)
waypoints_iso[..., 1] = -waypoints_carla[..., 1]  # flip y
headings_iso = -headings_carla  # flip yaw sign
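Why the yaw sign flips together with y: mirroring the y axis maps a heading's unit vector (cos θ, sin θ) to (cos θ, -sin θ), which is exactly (cos(-θ), sin(-θ)). The short NumPy check below converts the same headings both ways, once as direction vectors (like waypoints) and once as angles, and confirms the results agree. It assumes headings are in radians; the angle values are arbitrary test inputs.

```python
import numpy as np

# Arbitrary heading angles in CARLA's frame (radians assumed).
theta_carla = np.array([0.0, 0.3, -1.2, 2.5])

# Treat each heading as a unit direction vector (x, y) in the CARLA frame.
dirs_carla = np.stack([np.cos(theta_carla), np.sin(theta_carla)], axis=-1)

# Route 1: convert the vectors exactly like waypoints (x unchanged, y flipped).
dirs_iso = dirs_carla.copy()
dirs_iso[..., 1] = -dirs_carla[..., 1]

# Route 2: convert the angles like headings (flip the yaw sign).
theta_iso = -theta_carla

# Both routes agree: the mirrored vector points along the negated yaw.
consistent = np.allclose(dirs_iso, np.stack([np.cos(theta_iso), np.sin(theta_iso)], axis=-1))
print(consistent)  # True
```

Because the conversion is a reflection, applying it twice returns the original values, so the same three lines also convert ISO 8855 inputs into CARLA's frame.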

Project Page | Paper | Code

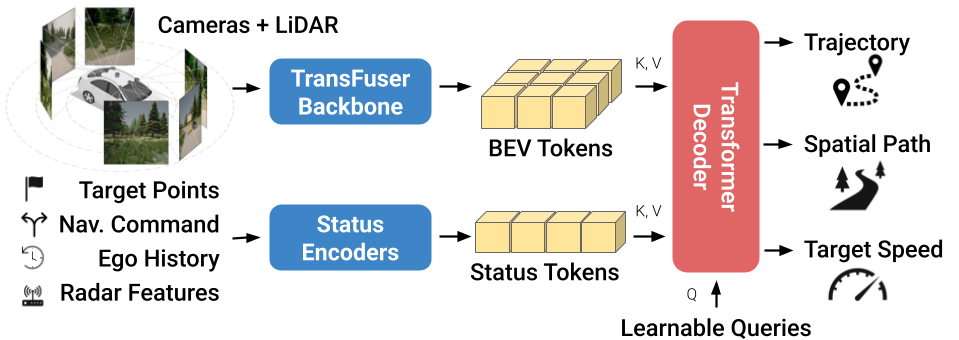

Official model weights for Latent TransFuser v6 (LTFv6). This NAVSIM checkpoint accompanies our paper LEAD: Minimizing Learner–Expert Asymmetry in End-to-End Driving.

We release the complete pipeline required to achieve state-of-the-art closed-loop performance on the Bench2Drive benchmark. Built around the CARLA simulator, the stack features a data-centric design with:

- Extensive visualization suite and runtime type validation for easier debugging.

- Optimized storage format that packs 72 hours of driving into ~200 GB.

- Native support for NAVSIM and Waymo Vision-based E2E, extending these benchmarks with closed-loop simulation and with synthetic data for additional supervision during training.
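For a rough sense of scale of the storage figure (72 hours of driving in ~200 GB), the back-of-the-envelope rate is computed below. It assumes decimal units (1 GB = 10^9 bytes) and treats ~200 GB as exact, so the numbers are approximate.

```python
# Back-of-the-envelope storage rate for "72 hours of driving in ~200 GB".
# Assumes decimal units (1 GB = 1e9 bytes); the ~200 GB figure is approximate.
total_bytes = 200e9
hours = 72
total_seconds = hours * 3600

gb_per_hour = total_bytes / 1e9 / hours
mb_per_second = total_bytes / 1e6 / total_seconds

print(f"{gb_per_hour:.2f} GB/h, {mb_per_second:.2f} MB/s")  # 2.78 GB/h, 0.77 MB/s
```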

Find more information at https://github.com/autonomousvision/lead.

Usage

Install dependencies

pip install torch timm numpy opencv-python jaxtyping beartype omegaconf huggingface_hub
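Note that opencv-python is imported as cv2, while the other packages import under their pip names. A minimal standard-library sketch to check which of the listed dependencies are importable in the current environment; the helper name missing_packages is hypothetical, not part of the LEAD code.

```python
import importlib.util

# pip package name -> import name for the dependencies in the pip command above.
REQUIRED = {
    "torch": "torch",
    "timm": "timm",
    "numpy": "numpy",
    "opencv-python": "cv2",
    "jaxtyping": "jaxtyping",
    "beartype": "beartype",
    "omegaconf": "omegaconf",
    "huggingface_hub": "huggingface_hub",
}


def missing_packages() -> list[str]:
    """Return the pip names whose top-level module cannot be found."""
    return [pip_name for pip_name, module in REQUIRED.items()
            if importlib.util.find_spec(module) is None]


if __name__ == "__main__":
    missing = missing_packages()
    print("All dependencies found." if not missing else f"Missing: {', '.join(missing)}")
```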

See example.ipynb to inspect the data format and run example inference.

Data Format

We also provide an example NAVSIM cache here.

Input:

- RGB: (256, 1920, 3), range [0, 255]

- Command: [left, straight, right, unknown], e.g. [0, 1, 0, 0] for straight

- Speed: m/s

- Acceleration: m/s²
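A minimal sketch of assembling one input sample with the shapes, ranges, and command order listed above. The dictionary keys and the helpers encode_command and make_sample are hypothetical (example.ipynb shows the names the model actually expects), and the uint8 RGB dtype is an assumption based on the [0, 255] range.

```python
import numpy as np

# Order of the one-hot command vector, as documented above.
COMMANDS = ("left", "straight", "right", "unknown")


def encode_command(name: str) -> np.ndarray:
    """One-hot encode a navigation command, e.g. 'straight' -> [0, 1, 0, 0]."""
    onehot = np.zeros(len(COMMANDS), dtype=np.float32)
    onehot[COMMANDS.index(name)] = 1.0  # raises ValueError for unknown names
    return onehot


def make_sample(rgb: np.ndarray, command: str, speed_mps: float, accel_mps2: float) -> dict:
    """Bundle one input sample; the keys are hypothetical placeholders."""
    if rgb.shape != (256, 1920, 3):
        raise ValueError(f"expected RGB of shape (256, 1920, 3), got {rgb.shape}")
    if rgb.min() < 0 or rgb.max() > 255:
        raise ValueError("RGB values must lie in [0, 255]")
    return {
        "rgb": rgb,
        "command": encode_command(command),
        "speed": float(speed_mps),          # m/s
        "acceleration": float(accel_mps2),  # m/s^2
    }


sample = make_sample(np.zeros((256, 1920, 3), dtype=np.uint8), "straight", 5.0, 0.2)
print(sample["command"])  # [0. 1. 0. 0.]
```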

Output:

- waypoints: (N, 2), predicted positions

- headings: (N,), predicted angles

Both outputs are in CARLA's left-handed frame (see the coordinate system warning above).
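Many downstream planners and evaluation tools consume a trajectory as stacked (x, y, yaw) poses. A minimal sketch, assuming NumPy outputs and an ISO 8855 consumer, that validates the documented output shapes and stacks the two arrays after applying the y and yaw flips from the manual conversion; the function name to_iso_poses is hypothetical.

```python
import numpy as np


def to_iso_poses(waypoints: np.ndarray, headings: np.ndarray) -> np.ndarray:
    """Stack CARLA-frame outputs into an (N, 3) array of ISO 8855 (x, y, yaw) poses."""
    if waypoints.ndim != 2 or waypoints.shape[1] != 2:
        raise ValueError(f"waypoints must have shape (N, 2), got {waypoints.shape}")
    if headings.shape != (waypoints.shape[0],):
        raise ValueError(f"headings must have shape (N,), got {headings.shape}")
    poses = np.empty((waypoints.shape[0], 3), dtype=np.float64)
    poses[:, 0] = waypoints[:, 0]   # x: forward in both frames, unchanged
    poses[:, 1] = -waypoints[:, 1]  # y: right (CARLA) -> left (ISO 8855)
    poses[:, 2] = -headings         # yaw: sign flipped along with the y axis
    return poses


wp = np.array([[1.0, 0.5], [2.0, 1.2]])
yaw = np.array([0.1, 0.3])
print(to_iso_poses(wp, yaw))
```

Failing loudly on a shape mismatch is deliberate: a silently broadcast (N, 1) heading array would otherwise produce plausible-looking but wrong poses.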

Citation

If you find this work useful, please cite:

@inproceedings{Nguyen2026CVPR,
  author = {Long Nguyen and Micha Fauth and Bernhard Jaeger and Daniel Dauner and Maximilian Igl and Andreas Geiger and Kashyap Chitta},
  title = {LEAD: Minimizing Learner-Expert Asymmetry in End-to-End Driving},
  booktitle = {Conference on Computer Vision and Pattern Recognition (CVPR)},
  year = {2026},
}
